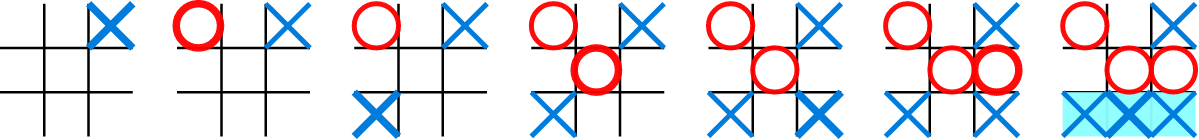

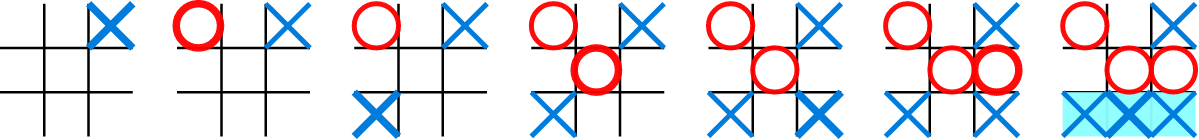

A game of Tic Tac Toe (from [1])

| x0 | x1 | x2 |

| x3 | x4 | x5 |

| x6 | x7 | x8 |

>>> import numpy >>> A = numpy.loadtxt('tictac_final.txt') >>> X = A[:,:9] # Input features >>> y = A[:,9:] # Output labelsAssignment requirements

- Report performance of these classifiers on the above two classification datasets: linear SVM, k-nearest neighbors, and multilayer perceptron. We have included links to the relevant classes in scikit-learn, in case you are using Python. You can manually choose the number of neighbors k, and the architecture of your multilayer perceptron, although be aware this introduces some overfitting risk. You should use k-fold cross validation (using e.g. 10 folds).

Please write a single classifier program that outputs the statistical accuracy and confusion matrices for both datasets and for each of these classifiers. Please normalize the confusion matrices so that each row sums to one. Note that none of the datasets are randomly shuffled so you should randomly shuffle the order of the dataset before using cross-validation.

- Run these regressors on the intermediate boards optimal play (multi label) dataset: k-nearest neighbors, linear regression, and multilayer perceptron. For linear regression, implement the solution yourself via the normal equations derived in class. Note that one linear regressor can be trained independently for each output. For simplicity, you can use a squared loss function.

Write a single regressor program which evaluates each of these regressors. Since this is really a multi-label classification problem that is being treated as a regression problem, please report the accuracy of the multi-label regressor as if it were a classifier, by rounding the output of each regression output to either 0 or 1 and comparing with the testing data. Use k-fold cross-validation also when assessing the accuracy for this part.

- Make a writeup, where you report the accuracy and normalized confusion matrices for the 3 classifiers, and the accuracy for the 3 regressors. Explain which method worked best for classification and for regression, and why. Investigate (and report) what happens to the accuracy of the classifiers if they are trained on 1/10 as much data, or a substantial fraction of the ground truth labels are corrupted by random noise (this could be a simple way to model the inclusion of non-optimal gameplay in the training set). Explain why certain methods scale better to larger datasets than the others. Hint: the multilayer perceptron should work better than k-nearest neighbors regressors, but they both should have above 80% accuracy, and should play a decent game of Tic Tac Toe in the next step.

- Implement a simple command-line (or Web-based) Tic Tac Toe game where a human (player X, i.e. +1) can play the best machine learning model (player O, i.e. -1). You can implement the computer player as follows: suppose the computer is given a current board. Then find the output of the regressor that is highest for the given board, which is not already taken by another player's move, and make the computer move that corresponds to the choice most preferred by the model. You can use the intermediate play classifier for the entire game. Describe in your writeup whether you can beat the computer, and how hard this is for the three regressors.

- Optional extra credit: the above machine learning setup requires an expert human or computer player to observe and learn in a supervised manner from. You can explore the use of alternative techniques such as genetic algorithms.

Clarifications

yourname_project1.zip. Please include programs that will automatically print the above requirements for the classifiers, the regressors, and a program that implements the Tic Tac Toe gameplay with a human.