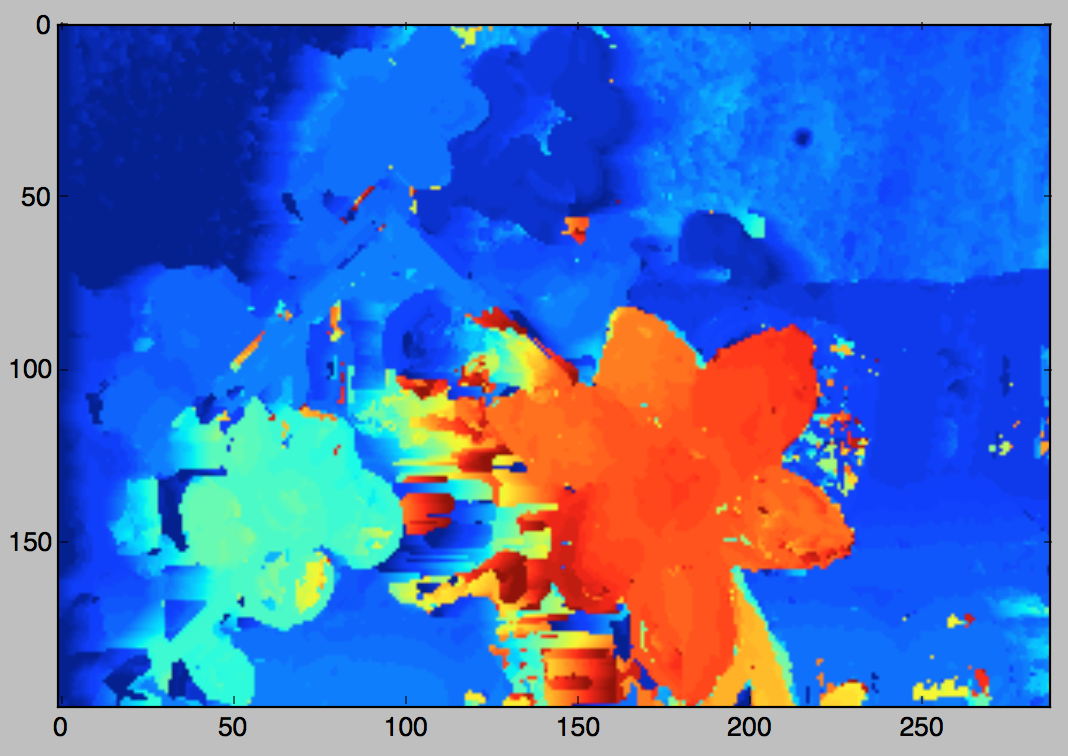

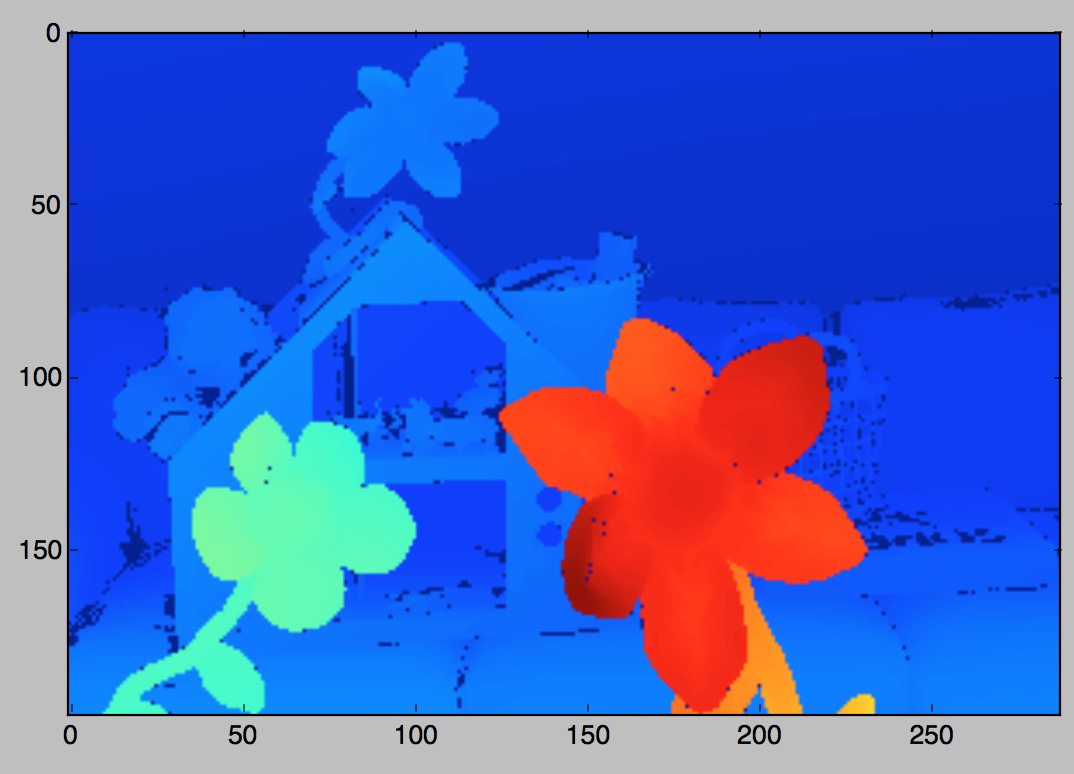

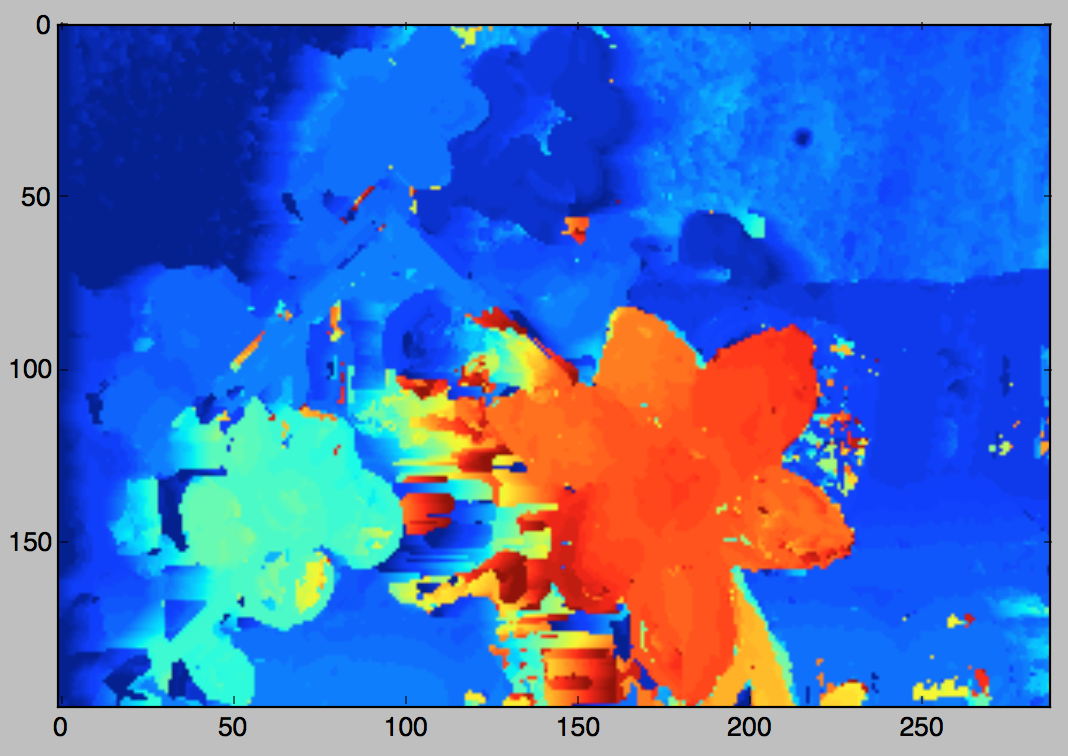

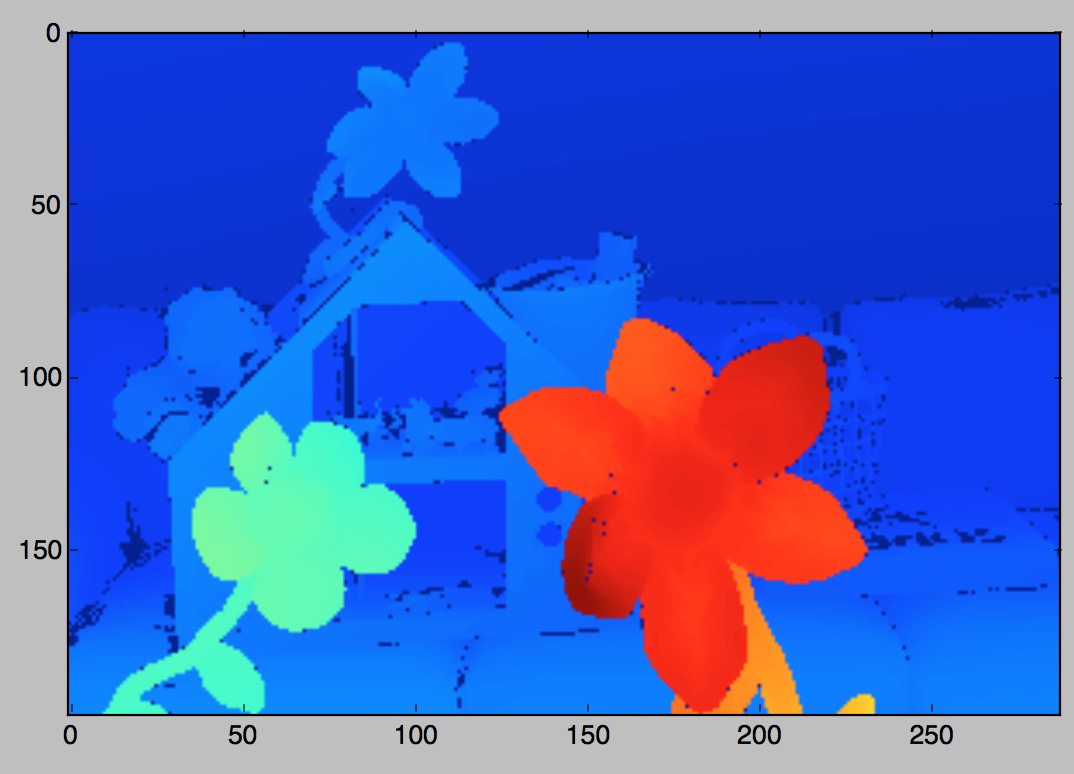

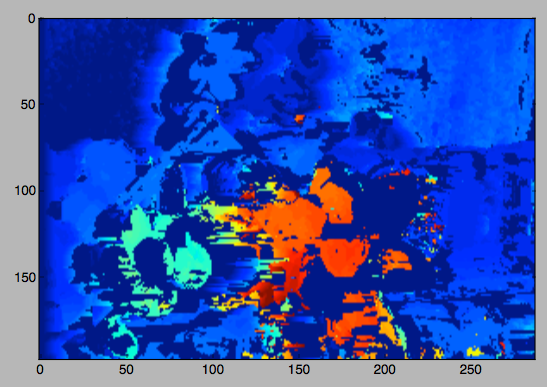

From left to right: left image, right image, disparities from simple stereo matching, ground truth.

import cv2 from an interactive Python prompt.

numpy.load to load the previous ground truth .npy file). Print out the root-mean-square (RMS) distance between the ground truth disparity and your disparity. Experiment with changing the σ of the Gaussian filter, and report in the readme submitted with your assignment the least RMS distance that you can obtain by using Gaussian filtering. Also include an image visualizing the stereo matching for the Gaussian filter.

cv2.ximgproc.jointBilateralFilter(joint, src, d, sigmaColor, sigmaSpace) -> result image (see also the C++ OpenCV documentation for this function). The joint bilateral filter blurs out a source image src (in this case, this can be the slice at each disparity d through the disparity space image) so that the blur does not cross edges in the joint image joint (in our case, this can just be the left color image). Experiment with sigmaColor and sigmaSpace to see if you can obtain a better result than Gaussian filter in terms of the RMS distance between your result and the ground truth. Report in your readme the least RMS distance from the ground truth that you can obtain by bilateral filtering. Include a visualization of the stereo matching.

Hint: the joint bilateral filter prefers a greyscale joint image in float32 with a maximum value not exceeding 1.
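The evaluation loop described above can be sketched with numpy alone. This is a minimal sketch under stated assumptions, not the assignment's reference solution: the function names, the dsi[y, x, d] array layout, and the convention that a lower cost means a better match are all assumptions, and the greyscale conversion is a plain channel mean rather than a perceptual weighting.

```python
import numpy

def rms_distance(disparity, ground_truth):
    """Root-mean-square difference between two disparity maps of equal shape."""
    diff = (numpy.asarray(disparity, dtype=numpy.float64)
            - numpy.asarray(ground_truth, dtype=numpy.float64))
    return float(numpy.sqrt(numpy.mean(diff ** 2)))

def disparity_from_dsi(dsi, smooth=None):
    """Pick the lowest-cost disparity per pixel from dsi[y, x, d] (assumed layout).

    smooth, if given, is a function applied to every 2-D slice dsi[:, :, d]
    before the per-pixel minimum is taken.
    """
    if smooth is not None:
        slices = [smooth(dsi[:, :, d]) for d in range(dsi.shape[2])]
        dsi = numpy.stack(slices, axis=2)
    return numpy.argmin(dsi, axis=2)

def to_joint_image(color_image):
    """Greyscale float32 image in [0, 1] from a uint8 color image (plain channel mean)."""
    grey = color_image.astype(numpy.float32).mean(axis=2) / 255.0
    return grey.astype(numpy.float32)
```

With OpenCV available, smooth could be something like lambda s: cv2.GaussianBlur(s, (0, 0), sigma) for the Gaussian case, or lambda s: cv2.ximgproc.jointBilateralFilter(joint, s.astype(numpy.float32), -1, sigma_color, sigma_space) for the bilateral case, where joint comes from to_joint_image. Note that the filter's d argument is a neighborhood diameter, not a disparity; a non-positive value should make OpenCV derive it from sigmaSpace.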


cv2.imread().

sift should be changed to: sift = cv2.xfeatures2d.SIFT_create() for OpenCV version 3+. You can check your OpenCV version by printing cv2.__version__ in Python).
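Because the SIFT constructor moved between OpenCV releases, one way to avoid editing the code per version is to probe for whichever constructor is present. The helper below is a hypothetical sketch (make_sift is not part of OpenCV). It assumes cv2.SIFT_create for OpenCV 4.4 and later, cv2.xfeatures2d.SIFT_create for 3.x builds with the contrib modules, and cv2.SIFT for the 2.4 series. The module is passed in as an argument so the sketch itself does not require OpenCV to be installed.

```python
def make_sift(cv2_module):
    """Return a SIFT detector using whichever constructor this OpenCV build exposes."""
    # OpenCV 4.4+ ships SIFT in the main module.
    if hasattr(cv2_module, "SIFT_create"):
        return cv2_module.SIFT_create()
    # OpenCV 3.x (and early 4.x) keep it in the contrib xfeatures2d module.
    xfeatures2d = getattr(cv2_module, "xfeatures2d", None)
    if xfeatures2d is not None and hasattr(xfeatures2d, "SIFT_create"):
        return xfeatures2d.SIFT_create()
    # OpenCV 2.4 style constructor.
    return cv2_module.SIFT()
```

Typical use would be import cv2 followed by sift = make_sift(cv2).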

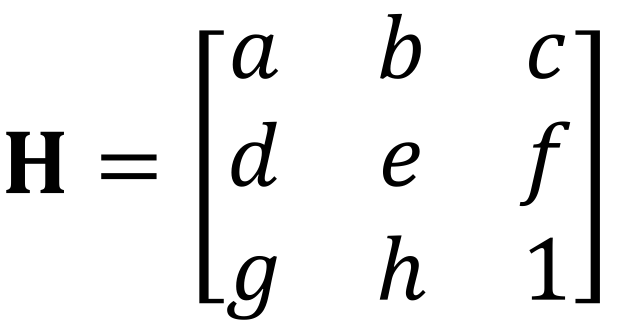

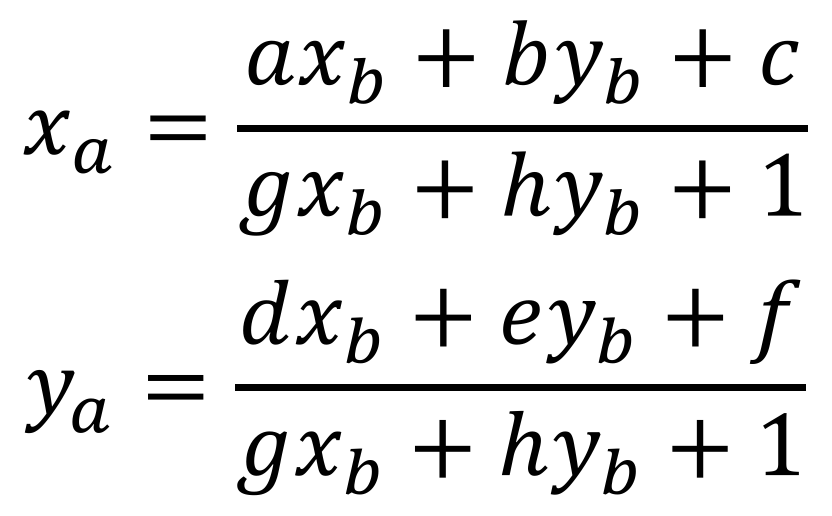

| (1) |

numpy.zeros, and populate these arrays by a single loop through all four points, with two rows (corresponding to two equations) populated for each point. This will avoid an unnecessary explosion of code/algebra.

i can be extracted with (key_list[i].pt[0], key_list[i].pt[1]).

    import skimage, skimage.transform, numpy, numpy.linalg

    def composite_warped(a, b, H):
        "Warp images a and b to a's coordinate system using the homography H which maps b coordinates to a coordinates."
        out_shape = (a.shape[0], 2*a.shape[1])                               # Output image (height, width)
        p = skimage.transform.ProjectiveTransform(numpy.linalg.inv(H))       # Inverse of homography (used for inverse warping)
        bwarp = skimage.transform.warp(b, p, output_shape=out_shape)         # Inverse warp b to a coords
        bvalid = numpy.zeros(b.shape, 'uint8')                               # Establish a region of interior pixels in b
        bvalid[1:-1,1:-1,:] = 255
        bmask = skimage.transform.warp(bvalid, p, output_shape=out_shape)    # Inverse warp interior pixel region to a coords
        apad = numpy.hstack((skimage.img_as_float(a), numpy.zeros(a.shape))) # Pad a with black pixels on the right
        return skimage.img_as_ubyte(numpy.where(bmask==1.0, bwarp, apad))    # Select either bwarp or apad based on mask
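The array setup described above for the four-point homography fit (arrays created with numpy.zeros, two rows filled per point in a single loop) can be sketched as follows. This is one common formulation, assuming the bottom-right entry of H is fixed to 1 and that H maps b coordinates to a coordinates. The equation in the assignment may be arranged differently, and the names fit_homography and apply_homography are hypothetical.

```python
import numpy

def fit_homography(pts_b, pts_a):
    """Estimate the 3x3 homography (with H[2, 2] = 1) mapping four b points to four a points."""
    A = numpy.zeros((8, 8))
    rhs = numpy.zeros(8)
    for i in range(4):
        x, y = pts_b[i]      # source point in b
        xp, yp = pts_a[i]    # corresponding point in a
        # Two linear equations per correspondence, one for each output coordinate.
        A[2 * i] = [x, y, 1, 0, 0, 0, -x * xp, -y * xp]
        A[2 * i + 1] = [0, 0, 0, x, y, 1, -x * yp, -y * yp]
        rhs[2 * i] = xp
        rhs[2 * i + 1] = yp
    # Raises numpy.linalg.LinAlgError for degenerate input (e.g. three collinear points).
    h = numpy.linalg.solve(A, rhs)
    return numpy.append(h, 1.0).reshape(3, 3)

def apply_homography(H, pt):
    """Map a single (x, y) point through H, dividing out the homogeneous coordinate."""
    x, y, w = H @ numpy.array([pt[0], pt[1], 1.0])
    return x / w, y / w
```

Inside a RANSAC loop, fit_homography would be called on each random sample of four matches, and apply_homography would then be used to count how many of the remaining matches land close to their partners.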

composite_warped to use linear blending to smoothly interpolate each pixel's color between the colors drawn from a's image and the corresponding colors drawn from the warped b image (bwarp). In particular, you can determine the distances to the region where only a pixels exist, and the region where only b pixels exist using a distance transform, and use these distances to make a smooth interpolation.
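The interpolation step described above can be sketched as a per-pixel weighted average. This is a minimal sketch, assuming the two distance maps have already been computed by a distance transform and that both images are float arrays of shape (height, width, channels) already aligned in a's coordinate system. The function name and argument names are hypothetical.

```python
import numpy

def linear_blend(a_img, b_img, dist_to_a_only, dist_to_b_only):
    """Blend two aligned float images using distances to the a-only and b-only regions."""
    total = dist_to_a_only + dist_to_b_only
    # Weight of a is 1 next to the a-only region and falls to 0 next to the b-only region.
    # Where both distances are 0 the weight defaults to 0.5.
    w_a = numpy.divide(dist_to_b_only, total,
                       out=numpy.full(total.shape, 0.5), where=total > 0)
    w_a = w_a[..., numpy.newaxis]  # Broadcast the weight over the color channels
    return w_a * a_img + (1.0 - w_a) * b_img
```

The blend is only meaningful in the overlap; pixels covered by just one image should still be copied from that image directly. One possible source for the distance maps, if SciPy is installed, is scipy.ndimage.distance_transform_edt applied to the inverse of each "only" mask, since that function measures the distance from nonzero pixels to the nearest zero pixel.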

yourname_project2.zip. Please include your source code as .py files, the results of stereo matching for the above image pair for (a) Gaussian filtering, (b) bilateral filtering, and (c) after rejecting occluded/mismatched regions. Please also include a readme describing how to run your code from the command-line, and the RMS distances to the ground truth for scenarios (a-c) listed in the previous sentence. In addition, please include the best homography matrix found by RANSAC and the composite panorama image.